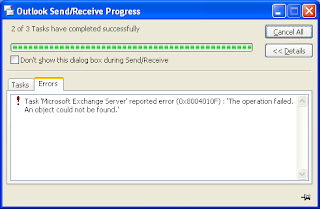

In the meaningless error category, let me present today's effort by Microsoft Outlook:

Tuesday, 1 February 2011

Thursday, 23 April 2009

Making Skype work with PulseAudio in Ubuntu Intrepid and Jaunty

Following my sound issues with Skype on Ubuntu, I did a bit more research and eventually found an excellent how-to article on PulseAudio in the Ubuntu forums. Appendix C explains how to get Skype to work properly and indeed it is a doddle and means there is no need to kill PulseAudio when using Skype! I've tested it in Intrepid and Jaunty and it works a treat. To quote the article, here's how to do it:

Open Skype's Options, then go to Sound Devices. You need to set "Sound Out" and "Ringing" to the "pulse" device, and set "Sound In" to the hardware definition of your microphone. For example, my laptop's microphone is defined as "plughw:I82801DBICH4,0".

You will have to experiment with the different options for "Sound In" until you find the correct one: choose an option and click on "Make a test call", repeating until you find the option that works. On my machine, here's what the option window looks like:

Tuesday, 13 January 2009

Tuning a Wi-Fi Router with Linux

Nowadays, with Wi-Fi broadband routers becoming the de facto standard in the home, comes a new problem for people who live in cities: interference between neighbouring wireless networks. This can lead to slow or even dropped connections. A few years ago it was not a problem: few people had Wi-Fi routers at home and if you had one, it would work great out of the box. Nowadays, everybody's got a Wi-Fi router at home, whether it be a traditional router or a phone that masquerades as one. Wi-Fi was designed to avoid interference by being able to work on a number of frequencies, and every single router allows you to choose what frequency to use by selecting a channel. In the UK, you will typically have the choice of a channel value between 1 and 13. Just go to the wireless settings area of your router administration page and you should be able to change the channel, as shown on this example from a Netgear router:

That's simple: change the value, ensure it's applied and, depending on the manufacturer, reboot the router. But what is a good value that will ensure a good connection? Well, it depends on your environment. As you want to avoid interference, this value should be as far as possible from the channels used by other routers within range of your equipment. But how do you tell what channel other routers in your area use? The Linux wireless tools come to the rescue, and in particular the one called iwlist. If you have a Wi-Fi laptop running Linux, it will have this utility installed as standard. The basic command we want is:

$ iwlist [interface] scan[ning]

To do a full scan, we need to run it as root so we'll prepend sudo to it. It is not necessary to specify a network interface but you might as well do so to avoid scanning non-wireless adapters. On my laptop, the wireless interface is eth1 so here is what I obtain by running iwlist against it:

$ sudo iwlist eth1 scan

eth1 Scan completed :

Cell 01 - Address: 02:1D:68:4B:6D:F6

ESSID:"BTOpenzone"

Protocol:IEEE 802.11bg

Mode:Master

Frequency:2.412 GHz (Channel 1)

Encryption key:off

Bit Rates:1 Mb/s; 2 Mb/s; 5.5 Mb/s; 6 Mb/s; 9 Mb/s

11 Mb/s; 12 Mb/s; 18 Mb/s; 24 Mb/s; 36 Mb/s

48 Mb/s; 54 Mb/s

Quality=27/100 Signal level=-83 dBm

Extra: Last beacon: 240ms ago

Cell 02 - Address: 00:1D:68:4B:6D:F5

ESSID:"BTHomeHub-954D"

Protocol:IEEE 802.11bg

Mode:Master

Frequency:2.412 GHz (Channel 1)

Encryption key:on

Bit Rates:1 Mb/s; 2 Mb/s; 5.5 Mb/s; 6 Mb/s; 9 Mb/s

11 Mb/s; 12 Mb/s; 18 Mb/s; 24 Mb/s; 36 Mb/s

48 Mb/s; 54 Mb/s

Quality=29/100 Signal level=-82 dBm

Extra: Last beacon: 248ms ago

... and so on

Each Cell section provides the details of a wireless hub in range. For each of them, the line we are interested in is the one that starts with the word Frequency. So, if we use grep to filter the data, we get:

$ sudo iwlist eth1 scan | grep Frequency

Frequency:2.412 GHz (Channel 1)

Frequency:2.412 GHz (Channel 1)

Frequency:2.412 GHz (Channel 1)

Frequency:2.427 GHz (Channel 4)

Frequency:2.442 GHz (Channel 7)

Frequency:2.442 GHz (Channel 7)

Frequency:2.462 GHz (Channel 11)

Frequency:2.442 GHz (Channel 7)

This shows that I am in range of 8 wireless hubs. The one I am using is the fourth one, set to use channel 4. But that's because I changed it yesterday. It used to be configured with its default setting, using channel 11, which was clashing with the one before last. In fact, running the command at different times, it appears that all routers in my area use channels 1, 7 or 11. With a possible set of channels between 1 and 13, there are 5 unused channels between 1 and 7, 3 between 7 and 11 and 2 above 11. So the best choice is halfway between 1 and 7: channel 4. And since I reconfigured the router to use that channel, speed has improved significantly and dropped connections have been a thing of the past.
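The reasoning above can be sketched in a few lines of Python. This is only a minimal sketch, not something I run on the router: the function names `parse_channels` and `best_channel` are made up for illustration, and it assumes the `(Channel N)` format shown in the iwlist output above and a UK-style range of channels 1 to 13. It picks the channel whose nearest occupied neighbour is as far away as possible.

```python
import re

def parse_channels(scan_output):
    """Extract the channel numbers from `iwlist ... scan` output."""
    return [int(n) for n in re.findall(r"\(Channel (\d+)\)", scan_output)]

def best_channel(used, lowest=1, highest=13):
    """Return the channel that is furthest from every channel in `used`."""
    if not used:
        return lowest

    def gap(channel):
        # Distance to the closest channel already taken by a neighbour.
        return min(abs(channel - u) for u in used)

    # max() keeps the first best candidate, so ties go to the lowest channel.
    return max(range(lowest, highest + 1), key=gap)

# Neighbouring networks only (my own router is left out of the list).
sample = """
Frequency:2.412 GHz (Channel 1)
Frequency:2.442 GHz (Channel 7)
Frequency:2.462 GHz (Channel 11)
"""
neighbours = parse_channels(sample)
print(best_channel(neighbours))
```

With neighbours on channels 1, 7 and 11 this prints 4, which matches the choice made by hand.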

Now, why router manufacturers don't design their products to scan neighbouring wireless networks at start-up and choose a frequency that doesn't clash with other hubs, I don't know. By all means leave power users the ability to specify the channel explicitly, but a little bit of automation would go a long way in making Wi-Fi easier for the average home user.

Update

After all this, I had to change the router's channel again earlier, as more access points were switched on during the evening. At one point, a full scan showed a grand total of 24 wireless networks! So I changed my router to channel 13 and it all seems fine so far. Methinks I'll have to do something about improving the range of that router. While I was at it, I also upgraded the firmware so we'll see if it makes a difference.

Thursday, 7 August 2008

Change Control

One of the biggest risks to any IT project is change. The more changes you get during a project, the more difficult it is to deliver it on time and on budget. Letting change happen willy-nilly is a bit like going to a bar and asking the barman for a white wine. Then when he's about to pour it, change your mind and ask for a red wine. And when he's about to pour the red wine, change your mind again and ask for a beer: any sensible barman will then stop and ask you to make your mind up before going any further, potentially charging you if you changed your mind after the drink was poured. It's also a sure way to get served very slowly.

IT projects are the same: if you keep changing your mind, you will never deliver and if you change your mind too late in the process, it's going to cost you a lot. This is why a good Change Control Process is essential.

Now, the project I currently work on has had every single aspect of it changed over the past three weeks. I cannot name a single thing that is identical to what it was three weeks ago. We even changed the Change Control Process!

In fact, I'm being unfair: there is one thing that hasn't changed. We are still working out of the same floor in the same office. But that's because we're only meant to move offices in October.

Monday, 7 July 2008

Peekaboo Nightmare, PIE to the Rescue

I've been working on the web site of a charity during my spare time for the past few months. Last night, I finally got round to uploading a prototype of a few of the revamped pages. Today I got an email from them saying in essence that they liked the prototype but there were a few quirks. The penny dropped immediately: I had been developing the prototype on my Mac and testing with Firefox, they were looking at it with IE on Windows. So I fired up IE on my work laptop and, lo and behold: my prototype was complete rubbish!

Having assessed the extent of the damage, I wrote back saying I'd work on it but if they downloaded Firefox they could see what it was meant to look like.

So when I got home, I started work on making it presentable in IE. It all took quite a lot of effort and swearing but I got there eventually. So here is what it took:

- The first problem was with clearing floats: IE doesn't like it when the clearing element is empty. Luckily, Position is Everything came to the rescue with this handy article on clearing.

- Then it appears that IE on Windows has "another spec violation that causes all boxes to expand and enclose all content, regardless of any stated dimensions that may be smaller", which is incidentally one of the features the above article on clearing relies on. So, if I wanted to hide my clearing elements, not only did I need to set their height to zero but their font-size too. Maybe the best way will be to properly implement the self-clearing method explained in that same article.

- And of course, with all those floats all over the place, I had to come across the peekaboo bug! Luckily, a few strategically placed width properties sorted it but only after a healthy bit of swearing.

Moral of the story: if you ever come across IE bugs and want to keep sane while resolving them, head for PIE before attempting any modification of your code. I used to know this, I just got reminded tonight.

Thursday, 19 June 2008

Web Sharing and PHP on Mac OS-X Leopard

Mac OS-X comes with a version of the Apache web server that is configured to allow users of the system to publish their own web pages directly from the Sites directory in their home folder. This is of limited use for the average user but is just great for web developers who can test their work directly, using a real web server. However, there is a glitch: if you upgrade from OS-X Tiger (10.4) to Leopard (10.5), existing users will suddenly get an HTTP error 403 Forbidden when navigating to their web pages. This is because in Leopard, Apache's security is tightened by default.

Apple provide an article that describes how to re-enable access for those users. However, their version will still deny access to sub-directories. So I slightly adapted the shortname.conf file to make it more flexible. Here is my version:

<Directory "/Users/shortname/Sites/*">

Options Indexes MultiViews

AllowOverride FileInfo

Order allow,deny

Allow from all

</Directory>

The star (*) at the end of the directory name on the first line ensures that the rule applies not only to the ~/Sites directory but also all sub-directories. The FileInfo value for the AllowOverride option on the third line tells Apache to allow settings override in a .htaccess file in that directory or any sub-directory thus allowing much finer grained control.

After getting this to work, it appears that, although PHP5 is installed, it is not enabled in Leopard's Apache installation. Enabling it is very easy and very well explained at Foundation PHP.

There you go: a full-blown web server with PHP support is just what you need to locally test drive your beautiful web creations and you don't even have to install any extra software.

Wednesday, 5 March 2008

Leap Years and Microsoft

Following Leap Day last Friday and the confusion this seemed to generate, it looks like the problem is even worse than originally thought, especially where Microsoft products are concerned. And reading the comments on that Register article, it looks like they are not the only ones.

Via The Register.

Sunday, 2 March 2008

Asus Eee PC, Nokia E65 and WPA Wireless Networks

My home wireless network uses WPA for security. When I received my Asus Eee PC, it could not connect to my wireless network complaining about the shared key being too long, which I found odd because all other devices connected fine. Then when I got my Nokia E65, it couldn't connect either but wouldn't tell me why.

Then, over the weekend and prompted by a friend, I decided to fiddle with the Eee PC and get it to connect to the wireless network. So, in an attempt to humour the machine, I changed the pass phrase on my network to something shorter. And lo and behold, the Eee connected! It could then ping all the machines on the network except the router it was actually connected to. As a result, it couldn't route any traffic outside the network so couldn't get on the internet. Checking the routing tables, everything looked fine. It could resolve any name into an IP address so it had nothing to do with the DNS. I thought there could be something dodgy with DHCP so I re-configured the interface manually... and it worked! Going backwards, I set it back to DHCP and... it worked fine, even though I didn't change anything compared to the first attempt. That's IT for you: sometimes, doing the same thing twice fails the first time and works the second, for no apparent reason.

Now all chuffed by my success with the Eee, I decided to try again with the Nokia E65. I first spent a good 20 minutes trying to find how to set up access points and being defeated by the completely non-intuitive menu system of the S60 operating system that runs on those phones. I then turned to the user manual (yes, I know, RTFM) which only marginally helped because said manual is riddled with mistakes and the relevant menu option mentioned did not exist. So I spent another 10 minutes finding out where the manual was wrong. I eventually found what I wanted and set up my access point. Of course, in keeping with the experience with the Eee, it failed to connect first time. The main difference was that the E65 just closed the browser without explanation rather than tell me what went wrong. But trying a second time it worked fine.

So there you go: shortening the WPA secret key means that all my wireless enabled devices can now connect to my home network (apart from the Nintendo DS but that's because it doesn't support WPA at all). Some might say that a shorter key means my network is less secure. Yes and no: considering the network doesn't advertise its SSID in the first place, that's two pieces of information you need to guess. And then, because I knew I was shortening the pass phrase, I took more time to think of something that would be more difficult to guess.

PS: Nokia, could you please ditch the poor excuse for an operating system called S60 and give us something intuitive and user friendly instead? Now that you've acquired Trolltech, could we have nice Qtopia based phones please?

Friday, 29 February 2008

Leap Year Maths

Today is Leap Day, the 29th of February. Reading this article on The Register earlier and in particular the comments, it looks like the logic behind calculating whether a year is a Leap Year is still fuzzy for some. This is an essential calculation to get right in any software that deals with dates (that is, most of them), so here are all the gory details.
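For reference, the Gregorian calendar rule is short: a year is a leap year if it is divisible by 4, except for century years, which must also be divisible by 400. Here is a minimal Python sketch of that rule (the function name `is_leap_year` is chosen here purely for illustration):

```python
def is_leap_year(year):
    """Gregorian rule: every 4th year, except centuries not divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)

for year in (1900, 2000, 2007, 2008):
    print(year, is_leap_year(year))
```

1900 and 2007 come out as ordinary years while 2000 and 2008 are leap years. Python's standard library offers the same test as `calendar.isleap`, so in real code there is rarely a reason to write your own.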

Sunday, 10 February 2008

Subversion on Ubuntu

The Cunning Plan

One of the planned functions for my new silent server was to offer a Subversion repository for code and documents that I could access via WebDAV. By doing this, I would be able to save important documents on the server and benefit from version control. Version control is essential for computer code but can also be very useful for other types of documents by allowing you to have multiple versions, revert to an old version, etc. So without further ado, let's get into the nitty-gritty of getting it to work on Ubuntu 7.10.

Resources

- Version Control with Subversion, the book about Subversion, which you can download for free,

- Install Subversion with Web Access on Ubuntu by the How-To Geek.

Installing the software packages

We need to install Subversion, Apache and the Subversion libraries for Apache. As this is all part of the standard Ubuntu distribution, it is extremely easy.

sudo apt-get install subversion apache2 libapache2-svn

And that's it, you have a working Subversion installation! It doesn't do very much yet so we need to create repositories for documents.

Subversion Repositories

Subversion is extremely flexible in the way it deals with files and directories. There are a number of standard repository layouts that are well explained in the book. Then there's the question of whether you'd rather put everything in the same repository or split it. This is also well explained in the book. My rule of thumb is to only create multiple repositories if you need to keep things completely separate, such as having one repository per customer, or if you need different settings. In my case, I need to store code and documents, the code being accessed via an IDE that completely supports Subversion, the documents being accessed as a network folder. Both usage scenarios require different settings in Apache so this is a typical case where several repositories are a good idea. However, there is no need for splitting the code or the document repositories further. You can create your repositories where you want in the file system; I chose to create them in a specific top level directory.

$ sudo mkdir /svn

$ sudo svnadmin create /svn/docs

$ sudo svnadmin create /svn/dev

$ sudo chown -R www-data:www-data /svn/*

The svnadmin command created a complete structure:

$ ls /svn/dev

conf dav db format hooks locks README.txt

The chown command above changes ownership of both repositories recursively so that the Apache instance can read and write to them. This is essential to get WebDAV to work.

Configuring Apache

Next, we need to configure Apache so that it can provide access to both repositories over WebDAV.

$ cd /etc/apache2/mods-available

$ sudo vi dav_svn.conf

In this file, I defined two Apache locations, one for each repository, with slightly different options. As this is a home installation, I don't need authentication. If you want to add authentication on top, check the How-To Geek article. The extra two options on the documents location are meant to enable network clients that don't support version control to store files. See Appendix C of the Subversion book for more details. Note that if you wanted to use several development repositories, such as one for each of your customers, you could replace the SVNPath option with SVNParentPath and point it to the parent directory.

<Location /dev>

DAV svn

SVNPath /svn/dev

</Location>

<Location /docs>

DAV svn

SVNPath /svn/docs

SVNAutoversioning on

ModMimeUsePathInfo on

</Location>

The last thing to do is to restart Apache:

$ sudo /etc/init.d/apache2 restart

Subversion Clients

Now that the Subversion server is working, it's time to connect to it using client software. For development, I use Eclipse, for which there is the Subclipse plugin. It all works as expected. For the documents repository, Apple OS-X has built-in support for WebDAV remote folders. Go to the Finder, select the menu Go > Connect to Server and type in the folder's URL in the dialogue box that appears, in my case http://szczecin.home/docs. It's also possible to browse both repositories using a web browser, which is a good way to provide read-only access.

Conclusion

That was a very short introduction to Subversion on Ubuntu. There's a lot more than this to it, a lot of it in the Subversion book. In particular, you can add authentication and SSL to the repositories once they are available through WebDAV. There are also a lot of options as far as Subversion clients are concerned and you can find free client software for every operating system you can think of.

Saturday, 26 January 2008

Playing with KML

The Idea

When I came back from holidays, I thought about creating a map of the journey in a way that I could share with friends. So I decided to try to do that using KML, the description language used by Google Earth. Google has tutorials and reference documentation about KML that got me started. In practice, what I wanted to do was very simple: lines showing the route and location pins showing the places I had visited on the way.

Document Structure

KML is a fairly straightforward XML dialect and the basic structure is very simple:

<?xml version="1.0" encoding="UTF-8"?>

<kml xmlns="http://earth.google.com/kml/2.2">

<Document>

Content goes here

</Document>

</kml>

Route

For the route, I wanted lines that would roughly follow the route I had taken. To do this in KML is simple: add a Placemark tag containing a LineString tag that itself contains the coordinates of the different points on the line. So a simple straight line from London to Hamburg looks something like this:

<Placemark>

<name>Outward flight</name>

<LineString>

<altitudeMode>clampToGround</altitudeMode>

<coordinates> -0.1261270000000025,51.50896699999998

9.994621999999991,53.55686600000001

</coordinates>

</LineString>

</Placemark>

The name tag is not essential but this is what will show in the side bar in Google Earth so it's better to have one. The clampToGround value in the altitudeMode tag tells Google Earth that each point on the line is on the ground so you don't have to specify the altitude in the coordinates. In the coordinates tag is a space separated list of coordinates. In this case, each point is specified by a longitude and a latitude separated by a comma, the altitude being implied. Longitude is specified with positive values East of the Greenwich meridian and negative values West of it. Latitude is specified with positive values North of the equator and negative values South of it.

That's good but another thing I wanted to do was specify different colours for the different types of transport I used during my holidays. KML has the ability to define styles that you can then apply to Placemark tags. This is done by adding a number of Style tags, each with its own id, at the beginning of the document. You then reference the style you want from each Placemark using a styleUrl tag. Applying this to the simple line above, we get:

<Style id="planeJourney">

<LineStyle>

<color>ff00ff00</color>

<width>4</width>

</LineStyle>

</Style>

<Placemark>

<name>Outward flight</name>

<styleUrl>#planeJourney</styleUrl>

<LineString>

<altitudeMode>clampToGround</altitudeMode>

<coordinates> -0.1261270000000025,51.50896699999998

9.994621999999991,53.55686600000001

</coordinates>

</LineString>

</Placemark>

Note that the value for the color tag in the LineStyle is the concatenation of 4 hexadecimal bytes. The first byte is the Alpha value, that is, how opaque the colour is; in our case the value ff specifies that it is completely opaque. The next three bytes represent the Blue, Green and Red values, in that order (the reverse of the order used in HTML colours); in our case a simple green.
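One thing to watch when coming from HTML colours: KML writes the colour bytes in the order alpha, blue, green, red (aabbggrr), so anything other than pure green or grey needs its red and blue bytes swapped. A small Python sketch of the conversion follows; the helper name `kml_color` is hypothetical and the input is assumed to be a six-digit `#rrggbb` string.

```python
def kml_color(html_color, alpha=255):
    """Convert an HTML colour such as "#ff8000" to KML's aabbggrr form."""
    rgb = html_color.lstrip("#")
    if len(rgb) != 6:
        raise ValueError("expected a colour of the form #rrggbb")
    red, green, blue = rgb[0:2], rgb[2:4], rgb[4:6]
    # Alpha comes first, then the colour bytes in reverse (blue, green, red).
    return f"{alpha:02x}{blue}{green}{red}".lower()

print(kml_color("#00ff00"))  # pure green
print(kml_color("#ff0000"))  # pure red
```

Pure green gives ff00ff00, the value used in the style above, while pure red gives ff0000ff rather than ffff0000.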

Places

For places, I wanted a simple marker that showed more information when you clicked on it. This is also very simple in KML: a Placemark tag containing a name, a description and a Point tag. The name needs to be a simple string but the description can contain a full-blown HTML snippet inside a CDATA node so you can include images, links and all sorts of things. Note that the box that will pop up when you select the place mark is quite small so don't overdo it. Here is an example for London:

<Placemark>

<name>London</name>

<description><![CDATA[<p><img src="some URL" /></p>

<p><a href="some URL">More photos...</a></p>]]></description>

<Point>

<coordinates>-0.1261270000000025,51.50896699999998,0</coordinates>

</Point>

</Placemark>

Note that the coordinates here include the altitude as well as the longitude and latitude. You could also apply a style to the location place marks, in the same way as was done for the lines.
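If you have more than a handful of lines and places, typing the XML by hand gets tedious. The sketch below shows one way the two kinds of Placemark could be generated with Python's standard `xml.etree.ElementTree` module. The helper names `line_placemark` and `point_placemark` are made up for illustration, the coordinates are rounded versions of the ones used above, and the description is written as escaped text rather than a CDATA node, which an XML parser treats the same way.

```python
import xml.etree.ElementTree as ET

def line_placemark(name, points):
    """Build a Placemark holding a ground-level LineString.

    `points` is a list of (longitude, latitude) pairs in decimal degrees.
    """
    placemark = ET.Element("Placemark")
    ET.SubElement(placemark, "name").text = name
    line = ET.SubElement(placemark, "LineString")
    ET.SubElement(line, "altitudeMode").text = "clampToGround"
    # KML wants "lon,lat" tuples separated by whitespace.
    ET.SubElement(line, "coordinates").text = " ".join(
        f"{lon},{lat}" for lon, lat in points
    )
    return placemark

def point_placemark(name, lon, lat, description_html=""):
    """Build a Placemark holding a single Point at altitude 0."""
    placemark = ET.Element("Placemark")
    ET.SubElement(placemark, "name").text = name
    # ElementTree escapes the HTML instead of wrapping it in CDATA.
    ET.SubElement(placemark, "description").text = description_html
    point = ET.SubElement(placemark, "Point")
    ET.SubElement(point, "coordinates").text = f"{lon},{lat},0"
    return placemark

london = (-0.126127, 51.508967)
hamburg = (9.994622, 53.556866)
flight = line_placemark("Outward flight", [london, hamburg])
city = point_placemark("London", *london, "<p>First stop</p>")
print(ET.tostring(flight, encoding="unicode"))
print(ET.tostring(city, encoding="unicode"))
```

The resulting elements still need to be placed inside the Document tag of the basic structure shown earlier.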

KML or KMZ

Once you've created your file, you need to save it with a .kml extension. You can then open it in Google Earth. When you're happy with it, you can also zip it and rename it with a .kmz extension: Google Earth will be able to load it as easily but the file will be smaller. Both files can also be used with Google Maps and can be shared online. So here is my complete holiday map built with KML.
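The zip-and-rename step can also be scripted. The following is a minimal Python sketch using the standard `zipfile` module; the function name `kml_to_kmz` and the file name `holiday.kml` are hypothetical, and storing the document as `doc.kml` inside the archive follows the usual KMZ naming convention rather than anything I have tested as a hard requirement.

```python
import os
import tempfile
import zipfile

def kml_to_kmz(kml_path, kmz_path):
    """Compress a .kml file into a .kmz archive."""
    with zipfile.ZipFile(kmz_path, "w", zipfile.ZIP_DEFLATED) as kmz:
        # Store the document under the conventional name doc.kml.
        kmz.write(kml_path, arcname="doc.kml")

# Demonstration on a throwaway file in a temporary directory.
with tempfile.TemporaryDirectory() as folder:
    kml_path = os.path.join(folder, "holiday.kml")
    kmz_path = os.path.join(folder, "holiday.kmz")
    with open(kml_path, "w", encoding="utf-8") as f:
        f.write('<?xml version="1.0" encoding="UTF-8"?>\n')
        f.write('<kml xmlns="http://earth.google.com/kml/2.2"><Document/></kml>\n')
    kml_to_kmz(kml_path, kmz_path)
    with zipfile.ZipFile(kmz_path) as kmz:
        print(kmz.namelist())
```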

Tips and Tricks

Getting the exact coordinates of a particular place can be cumbersome. To make it easy, just find the place in Google Earth, create a temporary place mark if there is none you can use, copy it with the Edit > Copy > Copy menu option and paste it in your text editor: you'll get the KML that defines the placemark, with exact coordinates.

The clampToGround option in the altitudeMode tag specifies that the points you define in the coordinates are at ground level. The line between two points will be straight, irrespective of what lies between said points. So if you have a mountain range in between, you will see your line disappear through the mountains. To correct this, you should insert intermediary points where the highest points are located. This is why on my map the flight between Izmir and Paris has intermediary points so that the line can go past the Alps without being broken.

If you want to do more complex stuff, be careful that Google Maps only supports a subset of KML. Of course, the whole shebang is supported by Google Earth.

Conclusion

KML is a nice and simple XML dialect to describe geographical data and share it online. It certainly beats writing postcards to show your friends and family where you've been and it doesn't get lost in the post.

Silent Server

A few months ago I set up a home server using an old box. Unfortunately that old box died shortly afterwards. Furthermore, it was quite noisy as it had been originally spec'ed as a high end workstation. So I went in search of a replacement, with a view to having a server that would be as silent and energy efficient as possible.

In this quest, I came across VIA, a Taiwanese company that specialises in low power x86 compatible processors and motherboards. You can get most of their hardware in the UK from mini-itx.com. But I'm not good at building a box from scratch so I really needed something already assembled. I found that at Tranquil PC, a small company based in Manchester. Here is the configuration I ordered from them:

- An entry T2e chassis with DVD-R drive, colour black.

- A VIA EN15000 motherboard. I chose this one as it is the only one that they offer that comes with a Gigabit Ethernet port and the new 1.5GHz VIA C7 processor, which is one of the most power-efficient.

- 1GB RAM. Experience tells me that this is more than I need but having extra RAM should enable the machine to take on more tasks in the future.

- A 100GB 2.5" HDD. I could have gone for a larger 3.5" HDD but I don't currently need the extra space and laptop drives are significantly more energy efficient and silent than desktop ones.

I received my T2e a week or so later. Unfortunately, it had been damaged in transit and the DVD drive was not working properly anymore. The support people at Tranquil PC were very nice and very efficient and arranged for the machine to be collected and sent back to them. It came back a week later in full working order.

I replicated the original install that I had done on the old server. Having done it once, it went very smoothly, everything working first time. The obvious difference from the start was how little noise the T2e makes. In fact, the only audible noise came from the DVD drive spinning the installation CD. Otherwise, it's as if the machine was switched off. Impressive! And it looks really cool with the blue glow coming out of the front panel. As I have a plug-in energy meter, I decided to check how much power this machine drew. So, once the installation was finished and the machine was up and running, I restarted everything with the meter in between the wall socket and the PC's plug. Results:

- Max power consumption when starting up: 30 Watts.

- Standard power consumption once in operation: 25 Watts.

In other words, even without ACPI enabled, this machine consumes about the same as a small standard light bulb. Once I've enabled ACPI and tweaked it somewhat, I should manage to make it consume even less.
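To put those figures in perspective, here is the arithmetic for a full year, written as a tiny Python sketch. It assumes the server runs around the clock at a constant draw, which is a simplification since the readings above were one-off measurements; the function name `yearly_kwh` is made up for illustration.

```python
def yearly_kwh(watts, hours_per_day=24):
    """Energy used over a 365-day year, in kilowatt-hours."""
    return watts * hours_per_day * 365 / 1000

print(yearly_kwh(25))  # steady-state draw measured above
print(yearly_kwh(30))  # worst case: start-up draw sustained all year
```

At a steady 25 Watts that is 219 kWh a year; multiply by your own electricity tariff to get the running cost.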

This proves that a server doesn't have to be a big power hungry and noisy box, it can be a small machine that is so silent you forget it's switched on. There are currently few suppliers for that sort of hardware but my guess is that it will become more common. In the meantime, head to Tranquil PC to find one of those.

Saturday, 29 December 2007

Photographic Metadata

When I first started with an SLR camera, some 13 years ago, all camera magazines had the same advice for beginners: to improve your pictures, write down all the settings you used, such as aperture or shutter speed, so that you can go back to this information once you have the prints and understand why they came out the way they did. As a result, a serious photographer would always have a small notebook with him to write all this down. It was quite a time consuming process but essential for anyone who wanted to improve. In this age of digital photography, it would seem sensible for the camera to store this information with the picture so that you can retrieve it later. And indeed they do, in metadata called EXIF data that is embedded in the image file. Software like Photoshop is able to read EXIF data but it's a bit overkill to fire up Photoshop just to look at this data. And it would also be nice to be able to write scripts based on it, such as a script that selects all pictures taken at a particular focal length.

Such a tool exists: it's called, quite simply, ExifTool. The tool is written in Perl so should work on any system that has Perl installed. There is a package for Mac OS-X that makes it really trivial to install. A proper install on Linux is slightly more convoluted so here's how to do it on Ubuntu:

- Download the latest version from the web site, in my case version 7.08;

- Extract the content of the file:

$ tar -xzf ./Image-ExifTool-7.08.tar.gz

- Install the Perl libraries so that they can be used by other Perl scripts:

$ cd ./Image-ExifTool-7.08

$ perl Makefile.PL

$ make

$ make test

$ sudo make install

- Install the main script:

$ sudo cp exiftool /usr/local/bin

Alternatively, you can use ExifTool directly from the directory where you extracted it if you just want to try it out. Using ExifTool in the command line is very easy, just call:

$ exiftool myfile.jpg

And it will output all the metadata tags it knows about. There are a number of options available, in particular, you can select what tags you want to see. It's all very well explained in the man page. And if you run exiftool without arguments, it will actually display said man page. So what sort of fun stuff can we do now? Here is an example that selects all the files with a .JPG extension in the current directory and below that are photographs taken with a focal length of 105mm:

$ find . -name "*.JPG" | while IFS= read -r f; do

> if exiftool -FocalLength "$f" | grep -q '105.0mm'; then

> echo "$f"

> fi

> done

Note that ExifTool formats its output such that it prints out the name of the tag followed by a colon and the value. If you want to strip that name and only keep the value, you can do something like this:

$ exiftool -FocalLength myfile.jpg | sed 's/^[^:]*: //'
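The same two ideas, stripping the tag name and selecting files by focal length, can be sketched in Python by calling exiftool as an external program. This is a minimal sketch under a few assumptions: exiftool is on the PATH, its output has the "name, colon, value" shape described above, and the helper names `tag_value`, `focal_length` and `photos_at` are made up for illustration. Spaces are removed before comparing so that both "105.0mm" and "105.0 mm" match.

```python
import subprocess
from pathlib import Path

def tag_value(exiftool_line):
    """Strip the tag name from a 'Tag Name : value' line printed by exiftool."""
    # Split on the first colon only, since values such as dates contain colons.
    _, _, value = exiftool_line.partition(":")
    return value.strip()

def focal_length(path):
    """Ask exiftool for the FocalLength tag of one file ('' if absent)."""
    result = subprocess.run(
        ["exiftool", "-FocalLength", str(path)],
        capture_output=True, text=True, check=False,
    )
    return tag_value(result.stdout)

def photos_at(root, wanted):
    """Yield .JPG files under `root` whose focal length starts with `wanted`."""
    for path in Path(root).rglob("*.JPG"):
        if focal_length(path).replace(" ", "").startswith(wanted):
            yield path

# Illustrative line in the "name: value" shape, not captured from a real run.
print(tag_value("Focal Length : 105.0 mm"))
```

To list the matching photographs, loop over `photos_at(".", "105.0mm")` and print each path.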

An interesting application, if you have a GPS receiver, is to combine the GPS trace log with the EXIF time information to geo-tag your photographs. There are a number of links on the ExifTool web site that point to such utilities. Once geo-tagged, online photo sites like Flickr will use this information to position the pictures on a map. Or more simply, to come back to what I was talking about at the beginning of this article, you could compare basic shot settings between pictures to understand why one is better than another.

Note that there are other tools than ExifTool to do this, some of which offer a graphic front-end that may be easier to use for those who are not comfortable with the command line, but ExifTool is by far the most complete and powerful. Just google for "exif reader" if you want to find other options.

Friday, 21 December 2007

Zend Framework on XAMPP

I am currently experimenting with the Zend Framework, using XAMPP on a Windows laptop and following Rob Allen's excellent tutorial. With a default installation of XAMPP, you get a nasty Error 500 whenever you test your system. This is because XAMPP doesn't enable the Apache mod_rewrite extension by default. So here's how to do it. Find the Apache configuration file, called httpd.conf, which on my install is in C:\xampp\apache\conf and uncomment the following line:

LoadModule rewrite_module modules/mod_rewrite.so

Restart XAMPP and it should all work.

Friday, 30 November 2007

Hidden Cost of Viruses

Computer viruses can cost you time and money, that's obvious: if your computer gets infected by a nasty virus, it can take ages to clean it, sometimes even requiring you to completely re-install your operating system from scratch. But there are other hidden costs to viruses and they can even cost you when your computer doesn't get infected. I have been discovering one of those costs on my new contract. I got given a nice laptop running Windows XP Pro (Vista? Who said Vista?) complete with corporate security software. And that's where the problem is: the virus scanner is configured to do a full scan every time I switch on the computer. So the routine in the morning is simple: arrive in the office, plug in the laptop, switch it on and go get a coffee. Then wait some more until it's finished thrashing the hard disk. End result: 15 minutes every morning.

Thursday, 22 November 2007

Gmail Spam Filter

Gmail used to have the best spam filter ever: I would never get spam in my inbox. In fact, until a few weeks ago I would have been unable to say when was the last time I had pressed the "Report Spam" button. Unfortunately, over the past few weeks, I've been clicking on that button more and more, the last time being 5 minutes ago. I only get a few a day at the moment but the worrying thing is that they are all emails that look like they should be blocked by the most brain dead of spam filters. Is something broken in the Googleplex? In their relentless attempts at blocking the more devious spam messages, did they accidentally break something in the code that was blocking the simple ones?

Saturday, 3 November 2007

DHCP and Dynamic DNS on Ubuntu Server

The cunning plan

I have broadband Internet at home and to connect to the outside world I use a Wi-Fi ADSL router. This router also acts as a DHCP and DNS server. The DHCP function is what allows any machine I connect to my home network to dynamically obtain an IP address. The DNS function is what resolves names into IP addresses; for example, the DNS will tell you that www.blogger.com is really called blogger.l.google.com and its address is 72.14.221.191. This is all well and good: when I switch on one of my computers and let it connect to the network, it will get its IP address from the router, which will also tell it to use the same router for name service queries. The router itself knows to delegate requests to my ISP's DNS so the new computer on the network has full access to the Internet. If I connect a second computer on the network, the same happens and both can have access to the Internet at the same time. Great! However, they don't know anything about each other. If I connect the two machines called nuuk and helsinki to my network, the DNS server is unable to tell either what the address of the other one is. This is because the DNS in the router is fairly basic and doesn't know to update its database when a new machine gets allocated an address by the DHCP service. Basically, we'd want a DHCP server that can communicate with the DNS server and tell it "oy, I've got a new machine on the network, here's its name and the address I just allocated to it" when a new machine comes online.

So what's a geek to do? Set up his own DHCP and DNS server obviously! And make them talk together. Luckily, I have an old workstation that I haven't used for many years and that I was planning to throw away: it's a bit dated but it should be exactly what I need for this. Then my recent experience with Ubuntu suggests that the recently released Ubuntu 7.10 Gutsy Gibbon Server Edition might be exactly what I need for the job. So let's get started.

The hardware

I said I had this dated workstation lying around. It's an 8 year old piece of kit that was originally built to run Red Hat Linux. It wasn't very good at it because Linux was not quite ready for the desktop back then, but it was a wicked piece of kit at the time:

- CPU: Dual 666 MHz Pentium III (Coppermine)

- Memory: 256 Mb

- Storage: 10 Gb SCSI hard disk (I got a SCSI controller rather than the cheaper IDE because I wanted to connect my SCSI negative scanner to it)

There's plenty of horsepower for what we want to do, more than enough storage and the memory should be fine if the operating system we install on it is lightweight enough. This is where the Server Edition of Ubuntu comes into play: it's meant for server hardware and doesn't have the nice but memory hungry desktop front-end, it's all command line driven. No glitz, just useful stuff. This means that even the latest version of Ubuntu Server should be able to run comfortably within 256 Mb and there should be no need to fall back on an older version of the operating system.

Preparation

Before we start, there is a bit of planning to do. As the new server will be the authority in terms of assigning IP addresses on the network, it needs to have its own address fixed. We also need to decide on a range of addresses to allocate through DHCP. As the router is currently using the address 192.168.1.254, it makes sense to leave it as it is. Here is the network configuration I am aiming for:

- ADSL router: 192.168.1.254

- New DHCP and DNS server: 192.168.1.253 and I'll call it szczecin

- DHCP address range: from 192.168.1.100 to 192.168.1.200

- Domain name: home (no need to have an official domain name and in fact it's probably better if it's not something that could be a valid Internet domain)

One thing I did before installing anything and that you may want to do as well is boot the new server with the desktop Ubuntu Live CD just to check that all the hardware is supported. The server edition is not a live CD so it doesn't give you the opportunity to check that before going ahead.

Installing Ubuntu

Let's plug everything together first: a monitor, a keyboard, no need for a mouse as it's all command line, power and VGA cables. And we might as well connect it to the network immediately so we'll need a network cable as well.

Start the machine, put the CD in the drive and follow the instructions. As usual with Ubuntu, it's quite easy. There are only a few things to be careful about:

- When it asks how to partition the disk, choose the automatic option using the whole disk.

- When it gets to network setup, cancel the DHCP client setup and configure the network manually. Give it the IP address chosen above and a name. When it asks for a DNS server, put its own address.

- Don't forget to select DNS in the list of additional services you want to install. I would suggest you also install the SSH server so that you can connect to the machine remotely.

At the end of the installation, the machine pops the CD out and asks you to confirm a restart. No nice funky Ubuntu logo when it restarts, it's all text and you are faced with a command line login prompt. If you want to keep working from the console you can, or you can just connect from any other machine connected to the same network using SSH, provided you installed the SSH server obviously. So let's login to our new server.

Configuring a simple static DNS

The first step is to configure a simple static DNS service that is able to resolve names for the router and the new server. Ubuntu 7.10 comes with BIND 9.4.1 as a DNS server and I have used O'Reilly's DNS and BIND book as my reference. The copy I have is the 3rd edition rather than the 5th but it is more than adequate for my purpose.

The very first task is to make sure we have the necessary basics in /etc/hosts:

127.0.0.1 localhost

192.168.1.253 szczecin.home szczecin

192.168.1.254 gateway.home gateway

Then we need to reproduce that in the BIND configuration. The first task is to find where the BIND configuration files are. On Ubuntu, you will find them in /etc/bind with a modular default set of files:

$ ls /etc/bind

db.0 db.127 db.255 db.empty db.local db.root named.conf named.conf.local named.conf.options rndc.key zones.rfc1918

The main file, named.conf, is constructed in such a way that for most simple installations, you should only have to change named.conf.local, which is exactly what we are going to do. But first, we need to create our database files. Let's start with the forward lookup, which we will name db.home:

home. IN SOA szczecin.home. admin.email.address. (

1 ; serial

10800 ; refresh (3 hours)

3600 ; retry (1 hour)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

home. IN NS szczecin.home.

localhost.home. IN A 127.0.0.1

szczecin.home. IN A 192.168.1.253

gateway.home. IN A 192.168.1.254

The first entry specifies the Start Of Authority, identifying that our server is the best source of information for this zone. The admin.email.address. bit can be any admin email address you want to advertise, with the @ sign replaced by a dot. It doesn't have to be a valid address if you don't want it to be. The second entry identifies this machine as the name server for this zone. If you had multiple servers, you'd need one line per server. The following lines reflect what we have in /etc/hosts.
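To illustrate the @ replacement, here is a small sketch that turns an email address into the form used in the SOA record. The function name soa_rname and the example addresses are made up for the occasion. It also escapes any dot in the part before the @ with a backslash, which is how zone files tell such a dot apart from a label separator:

```shell
# soa_rname EMAIL
# Convert an email address into the dotted, dot-terminated form used
# in the SOA record.
soa_rname() {
    local_part=${1%@*}     # everything before the last @
    domain=${1##*@}        # everything after the last @
    # escape dots in the local part so they are not read as separators
    escaped=$(printf '%s' "$local_part" | sed 's/\./\\./g')
    printf '%s.%s.\n' "$escaped" "$domain"
}

soa_rname admin@example.com       # prints admin.example.com.
soa_rname john.doe@example.com    # prints john\.doe.example.com.
```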

After that, we can work on the reverse lookup file, which will be named db.192.168.1:

1.168.192.in-addr.arpa. IN SOA szczecin.home. admin.email.address. (

1 ; serial

10800 ; refresh (3 hours)

3600 ; retry (1 hour)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

1.168.192.in-addr.arpa. IN NS szczecin.home.

253.1.168.192.in-addr.arpa. IN PTR szczecin.home.

254.1.168.192.in-addr.arpa. IN PTR gateway.home.

This file just defines the opposite mapping. The first two entries follow the same format as in the other file. Note how the IP addresses are back to front. The last two entries use the PTR record type rather than the A record type. We now need to declare those two database files in the BIND configuration. To do this, we just add the following to the end of the named.conf.local file:

zone "home" in {

type master;

file "/etc/bind/db.home";

};

zone "1.168.192.in-addr.arpa" in {

type master;

file "/etc/bind/db.192.168.1";

};

Note the semi-colons all over the place in the file: BIND doesn't like it if you forget them. We just need to restart the DNS for it to pick up the configuration:

$ sudo /etc/init.d/bind9 restart

If it doesn't start properly, the best way to identify what's wrong is to go have a look at the system log files. On Ubuntu, the DNS messages will be in /var/log/daemon.log. One last thing to do before we test our setup is to update the local resolver configuration by modifying /etc/resolv.conf. Remove all lines that start with nameserver so that the local resolver automatically sends requests to the local BIND instance irrespective of the IP address of the machine. We should end up with a file that contains a single line:

domain home

And we can now use nslookup to verify that it's all working:

$ nslookup szczecin

Server: 127.0.0.1

Address: 127.0.0.1#53

Name: szczecin.home

Address: 192.168.1.253

$ nslookup szczecin.home

Server: 127.0.0.1

Address: 127.0.0.1#53

Name: szczecin.home

Address: 192.168.1.253

$ nslookup gateway

Server: 127.0.0.1

Address: 127.0.0.1#53

Name: gateway.home

Address: 192.168.1.254

$ nslookup localhost

Server: 127.0.0.1

Address: 127.0.0.1#53

Name: localhost.home

Address: 127.0.0.1
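The checks above only exercise the forward lookup. For the reverse zone, the name stored in the database is the IP address written back to front with in-addr.arpa on the end. As a rough sketch of that transformation (reverse_name is a helper name I made up, and it only deals with plain IPv4 addresses):

```shell
# reverse_name IPV4
# Build the in-addr.arpa name for a dotted-quad IPv4 address by
# reversing its four octets.
reverse_name() {
    printf '%s\n' "$1" | awk -F. '{ print $4 "." $3 "." $2 "." $1 ".in-addr.arpa." }'
}

reverse_name 192.168.1.253   # prints 253.1.168.192.in-addr.arpa.
```

In practice you shouldn't need to build the name by hand to test: giving nslookup an IP address instead of a name, as in nslookup 192.168.1.253, should make it perform the reverse lookup for you.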

Installing the DHCP server

My reference for installing the DHCP server was an excellent article aimed at the Debian distribution by Adam Trickett. As Ubuntu is based on Debian, I didn't have much to change. In its default state, an Ubuntu installation doesn't include a DHCP server so we need to add it:

$ sudo apt-get install dhcp3-server

This will install the ISC DHCP server. It will ask you to insert the Ubuntu CD in the drive so just do so. It will then attempt to start the new DHCP server and fail saying that the configuration is incorrect, which is to be expected. Configuration files for this server can be found in /etc/dhcp3 and the one we are interested in is dhcpd.conf. Move the default version out of the way by renaming it and we will start anew with an empty file. Here is what I have on my system, based on Adam's article. I highlighted the lines that tell the DHCP server to update the DNS and what key file to use.

# Basic stuff to name the server and switch on updating

server-identifier 192.168.1.253;

ddns-updates on;

ddns-update-style interim;

ddns-domainname "home.";

ddns-rev-domainname "in-addr.arpa.";

# Ignore Windows FQDN updates

ignore client-updates;

# Include the key so that DHCP can authenticate itself to BIND9

include "/etc/bind/rndc.key";

# This is the communication zone

zone home. {

primary 127.0.0.1;

key rndc-key;

}

# Normal DHCP stuff

option domain-name "home.";

option domain-name-servers 192.168.1.253;

option ip-forwarding off;

default-lease-time 600;

max-lease-time 7200;

# Tell the server it is authoritative on that subnet (essential)

authoritative;

subnet 192.168.1.0 netmask 255.255.255.0 {

range 192.168.1.100 192.168.1.200;

option broadcast-address 192.168.1.255;

option routers 192.168.1.254;

allow unknown-clients;

zone 1.168.192.in-addr.arpa. {

primary 192.168.1.253;

key "rndc-key";

}

zone localdomain. {

primary 192.168.1.253;

key "rndc-key";

}

}

Now that we've done that, we need to modify the DNS configuration so that it can accept the changes. But first, a quick note on the /etc/bind/rndc.key file. This file is automatically created when you install DNS on Ubuntu and should be fine for your installation. It is a key file that authenticates the DHCP server to the DNS server so that only the DHCP server is allowed to send updates. You don't want random people to be able to update your DNS database. Having said that, we need to modify the DNS configuration to accept the updates so back to /etc/bind. And while we are at it, let's have a look at this key file.

key "rndc-key" {

algorithm hmac-md5;

secret "some base 64 encoded secret key";

};

Note the name of the key on the first line, it may vary from one distribution to another so make a note of it. Then we need to add the control information to the DNS configuration. Rather than update named.conf, I decided to add the relevant code to the end of named.conf.options: it feels like the right place to put it but I suspect it doesn't really matter. So add this to the end of the file:

// allow localhost to perform updates

controls {

inet 127.0.0.1 allow { localhost; } keys { "rndc-key"; };

};

Then we need to modify the zone definitions in the named.conf.local file. Here is what it looks like; the changes are the allow-update and notify lines in each zone and the include line at the end:

zone "home" in {

type master;

file "/etc/bind/db.home";

allow-update { key "rndc-key"; };

notify yes;

};

zone "1.168.192.in-addr.arpa" in {

type master;

file "/etc/bind/db.192.168.1";

allow-update { key "rndc-key"; };

notify yes;

};

include "/etc/bind/rndc.key";

We're nearly there. The changes we just made mean two things: the DNS server needs to be able to update the content of the /etc/bind directory and the DHCP server needs to be able to read the key file /etc/bind/rndc.key. By default on Ubuntu, this won't work as the permissions around those files are fairly stringent. So let's change them. As the BIND configuration directory belongs to root:bind, we just need to give write access to the group for BIND to be able to write to it. To give the DHCP server access to the key file, the right thing to do would be to add the dhcpd user to the bind group but we can also make the file readable to everybody. Yes this is less secure but will be fine for a home installation.

$ sudo chmod g+w /etc/bind

$ sudo chmod +r /etc/bind/rndc.key

Now we just need to start DHCP and restart DNS.

$ sudo /etc/init.d/dhcp3-server start

$ sudo /etc/init.d/bind9 restart

Error messages will be in the same place as before if the services don't start properly. If all starts as expected, it's now time to go to the administration interface of the router and disable DHCP. We should not need it anymore.

Booting the clients

The proof of the pudding is in the eating and the proof of installing a server is in starting a number of clients to use the service. The first machine I tried with was my Ubuntu laptop called nuuk. To see what happens, tail the log file. You should see something like the following appear:

Nov 3 15:14:02 szczecin dhcpd: DHCPDISCOVER from 00:12:f0:1e:f4:79 via eth0

Nov 3 15:14:03 szczecin dhcpd: DHCPOFFER on 192.168.1.102 to 00:12:f0:1e:f4:79 (nuuk) via eth0

Nov 3 15:14:03 szczecin named[4771]: client 127.0.0.1#32773: updating zone 'home/IN': adding an RR at 'nuuk.home' A

Nov 3 15:14:03 szczecin named[4771]: client 127.0.0.1#32773: updating zone 'home/IN': adding an RR at 'nuuk.home' TXT

Nov 3 15:14:03 szczecin dhcpd: Added new forward map from nuuk.home. to 192.168.1.102

Nov 3 15:14:03 szczecin named[4771]: client 192.168.1.253#32773: updating zone '1.168.192.in-addr.arpa/IN': deleting rrset at '102.1.168.192.in-addr.arpa' PTR

Nov 3 15:14:03 szczecin named[4771]: client 192.168.1.253#32773: updating zone '1.168.192.in-addr.arpa/IN': adding an RR at '102.1.168.192.in-addr.arpa' PTR

Nov 3 15:14:03 szczecin dhcpd: added reverse map from 102.1.168.192.in-addr.arpa. to nuuk.home.

Nov 3 15:14:03 szczecin dhcpd: Wrote 4 leases to leases file.

Nov 3 15:14:03 szczecin dhcpd: DHCPREQUEST for 192.168.1.102 (192.168.1.253) from 00:12:f0:1e:f4:79 (nuuk) via eth0

Nov 3 15:14:03 szczecin dhcpd: DHCPACK on 192.168.1.102 to 00:12:f0:1e:f4:79 (nuuk) via eth0
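On a busy network the interesting lines can get lost in the noise. Here is a minimal sketch of a filter that keeps only the forward map messages and prints the name and address from each. The function name forward_maps is my own, and the pattern assumes the message wording shown in the log above, which may differ with other versions of the DHCP server:

```shell
# forward_maps: read syslog-style lines on standard input and print
# "name address" for every forward map the DHCP server reports adding.
# Lines that do not match are silently dropped.
forward_maps() {
    sed -n 's/^.*dhcpd: Added new forward map from \([^ ]*\) to \([^ ]*\).*$/\1 \2/p'
}
```

For example, forward_maps < /var/log/daemon.log would list every machine registered so far, one per line.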

If you don't see the message about updating the forward and reverse maps, it may be that your client machine is not configured to send its name to the DHCP server. For this to work on Ubuntu, you should have the following line in /etc/dhcp3/dhclient.conf:

send host-name "<hostname>";

Next on the list is my Apple PowerMac G5 called helsinki. A simple restart and it works like a charm: I can ping helsinki from nuuk or szczecin and the other way round, all machines can access the Internet, great! While we're talking about computer names, if you don't know how to change the machine's name under OS X, here's how.

The next test is with my work's Windows XP laptop. All goes well: the Windows box gets an IP address and I can ping it from the other machines. However, I can't ping from the Windows box to the other ones unless I use the fully qualified domain name: that is, I can ping nuuk.home but not nuuk. It looks like Windows ignores the domain information sent by DHCP. This is not a major problem as everything else works fine. I vaguely remember that there is a network setting on Windows that you have to change. I'll have a look and see if I can remember where it was when I can.

The final test is to start my Solaris Express laptop called mariehamn. It gets its IP address fine but doesn't seem to send its name to the server. So it can't be pinged. Everything else works though: it can ping all of the other machines, get to the Internet, etc. I suspect I need to find the equivalent of the /etc/dhcp3/dhclient.conf file on Solaris and change it so that it sends its name. It's probably hidden somewhere in the Network Auto-Magic configuration.

Conclusion

That was a bit convoluted but the excellent resources that are the O'Reilly DNS and BIND book and Adam's article made it significantly easier. Real system administrators would say that was a doddle and made way too easy by Ubuntu. I've learnt useful stuff on the way and I now have a good use for this old workstation. Speaking of which, it hasn't really been breaking a sweat so far: it's been close to 100% idle all the time, it has 114Mb RAM free out of 256 and the hard disk has seen virtually no activity. In other words, my 8 year old box is over-specced for this and I could get it to do a lot more server tasks. Should I mention that the server version of some other recent operating systems that shall remain nameless would not even start on such a machine? No, I'll leave that debate for another day.

Sunday, 28 October 2007

From Feisty to Gutsy

Ubuntu released version 7.10 of their operating system, code named Gutsy Gibbon, 10 days ago. As my laptop was on the previous release (Feisty Fawn, 7.04), when I booted it yesterday the install manager suggested I upgrade. I decided to do so, wondering how long it would take and how complicated it would be, my experience of other operating systems that shall remain nameless telling me that I could be in for the long haul. I shouldn't have worried. In typical Ubuntu fashion, it was dead easy: once I had clicked on the "upgrade" button, it did everything on its own, I just had to provide my password so that it could have root privileges. In fact, the only difference with a normal package update was that it took longer and the laptop had to be restarted once at the end of the process in order to boot under the new version.

Needless to say, I was impressed. If only all operating system upgrades could be that easy!

Saturday, 30 June 2007

Charitable Search Engine

A newsletter I recently received from the RNLI pointed me to a new search engine, MagicTaxi. According to the home page, "MagicTaxi gives 50% of its advertising revenue to charity". So if you want to donate while you search, point your browser their way.

Cryptic Codes and Canon Lenses

As I have enough camera gear that a standard travel insurance will not cover it, I bought a special insurance just for the camera and associated bits and pieces. They require that I provide serial numbers for every item that is worth more than £200. So when I recently acquired a new EF 15mm f/2.8 Fisheye lens, I decided to add it to the insurance policy and went in search of the serial number. On the box, there is a sticker that specifies a serial number but, at first sight, it looks nothing like any of the codes on the lens itself. To start with, the lens has two codes: a numeric one and an alphanumeric one. Which one is the right one? After some digging on the net, I had the answer, so here's an illustration of it, with a couple of my lenses.

The numeric code is the actual serial number. On the EF 15mm f/2.8 Fisheye lens, it is located on the barrel, near the camera mount. On the EF24-105mm f/4 L IS USM lens, it is located on the bayonet flange.

Serial number on the EF15mm f/2.8 Fisheye and the EF24-105mm f/4 L IS USM

That same number is also available on the box the lens came in. On that box, there is a sticker with a bar code and a couple of numeric codes, with the title "Lens No". At first sight, none of the codes on the sticker seem to really match what's on the lens. But looking closer, it appears that the serial number is the end part of the bottom code. It all makes sense now! What the other codes mean, I don't have the faintest idea.

"Lens No" sticker with circled serial number

I also found an explanation for the alphanumeric code that can be found on the lens. This is a manufacturing code. My fish-eye lens has a code that says UV0509.

- The first letter, U, identifies the factory that produced the lens: Utsunomiya, in Japan.

- The second letter, V, identifies the year the lens was manufactured: 2007.

- The following two digits identify the month the lens was manufactured: May.

- The last two digits are an internal Canon batch number.

Manufacturing code on the EF15mm f/2.8 Fisheye
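If you have a few lenses to catalogue, the layout of the manufacturing code is easy to split mechanically. Here is a minimal sketch; the function name split_canon_code is my own invention, and it deliberately doesn't translate the letters into a factory or a year, as U and V are the only ones I can vouch for:

```shell
# split_canon_code CODE
# Split a six-character manufacturing code such as UV0509 into its four
# fields: factory letter, year letter, month digits and batch digits.
split_canon_code() {
    factory=$(printf '%s\n' "$1" | cut -c1)
    year=$(printf '%s\n' "$1" | cut -c2)
    month=$(printf '%s\n' "$1" | cut -c3-4)
    batch=$(printf '%s\n' "$1" | cut -c5-6)
    printf 'factory=%s year=%s month=%s batch=%s\n' "$factory" "$year" "$month" "$batch"
}

split_canon_code UV0509   # prints factory=U year=V month=05 batch=09
```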

So there you go, that's what all those numbers mean on Canon lenses. I can now fill in my insurance policy properly.